Google announces AI system for diagnostic medical reasoning and conversation

Airbnb at KDD '23, Graph Machine Learning at ICML 2023

Articles

Google wrote an article on an AI system for diagnostic medical reasoning and conversation.

The blog post introduces AMIE, a research AI system designed for diagnostic medical reasoning and conversations. This summary delves into the technical details of AMIE's architecture, capabilities, and performance:

1. Architectural Principles:

Multi-turn dialogue management: AMIE utilizes a state-tracking dialogue manager to engage in multi-turn conversations with clinicians and patients, dynamically updating its internal state based on the exchange.

Hierarchical reasoning model: AMIE employs a hierarchical reasoning model encompassing medical knowledge bases, probabilistic reasoning modules, and natural language processing (NLP) components. This model integrates clinical information with patient symptoms and history to formulate diagnoses and propose management plans.

Modular design: AMIE is modular, allowing for independent development and refinement of its various components (e.g., knowledge base, dialogue manager, NLP) while ensuring efficient integration.

2. Capabilities:

Comprehensive medical knowledge base: AMIE leverages a comprehensive knowledge base built from medical textbooks, clinical guidelines, and other sources. This knowledge base includes information on diseases, symptoms, medications, and diagnostic procedures.

Probabilistic reasoning: AMIE employs probabilistic reasoning modules to infer diagnoses from patient data. These modules consider the likelihood of different diagnoses given the observed symptoms and other findings.

Natural language processing: AMIE utilizes NLP techniques for understanding and responding to clinician/patient queries. This includes capabilities for parsing medical terminology, identifying key clinical findings, and generating natural language responses.

Explanation and transparency: AMIE provides explanations for its diagnostic reasoning and management recommendations, promoting transparency and building trust with healthcare professionals.
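To make "the likelihood of different diagnoses given the observed symptoms" concrete, here is a minimal sketch of Bayes' rule over two candidate conditions. It is a generic illustration, not AMIE's implementation: the function name, the condition and finding labels, the conditional-independence assumption, and all numbers are invented for the example.

```python
def posterior(priors, likelihoods, findings):
    """Return P(condition | findings), assuming findings are
    conditionally independent given the condition (naive Bayes)."""
    unnormalized = {}
    for condition, prior in priors.items():
        score = prior
        for finding in findings:
            score *= likelihoods[condition][finding]
        unnormalized[condition] = score
    total = sum(unnormalized.values())
    return {condition: score / total for condition, score in unnormalized.items()}


# Toy numbers, invented purely for illustration (not clinical data).
priors = {"condition_a": 0.1, "condition_b": 0.9}
likelihoods = {
    "condition_a": {"finding_x": 0.9, "finding_y": 0.8},
    "condition_b": {"finding_x": 0.1, "finding_y": 0.6},
}

result = posterior(priors, likelihoods, ["finding_x", "finding_y"])
for condition, probability in result.items():
    print(f"{condition}: {probability:.3f}")
```

Even though condition_a starts with a low prior, observing two findings that are much more likely under it shifts most of the posterior mass toward it.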

3. Performance Evaluation:

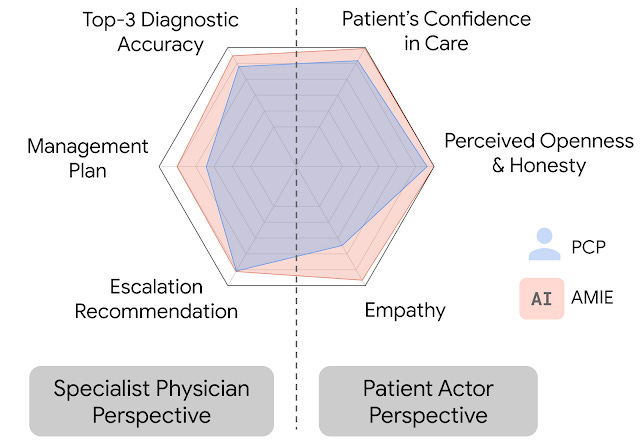

Simulated diagnostic conversations: AMIE's performance was evaluated in simulated diagnostic conversations with both primary care physicians (PCPs) and specialist physicians.

Evaluation metrics: Performance was assessed using various clinically-meaningful metrics, including diagnostic accuracy, completeness of information gathering, efficiency, and patient satisfaction.

Results: AMIE outperformed PCPs on multiple axes of dialogue quality and achieved comparable performance to specialist physicians.

Significance: AMIE's performance demonstrates the potential of AI systems to assist clinicians in diagnostic reasoning and patient communication, potentially improving healthcare outcomes.

4. Open Questions and Future Directions:

Generalizability: While AMIE performed well in simulated settings, further research is needed to evaluate its real-world performance in clinical practice.

Safety and ethical considerations: Ensuring that AI in healthcare is safe and that its ethical implications are addressed remains a crucial area of focus.

Integration with clinical workflows: Seamless integration of AI systems like AMIE into existing clinical workflows is essential for successful adoption.

Airbnb wrote a review article covering the papers its recommendations team submitted to or had accepted at KDD '23.

They cover various papers in different categories:

Deep learning and search ranking: One of the challenges in search ranking is that users are searching over a period of days or weeks, and not minutes. Airbnb also has to consider that it is a two-way marketplace, so they need to account for the potential for hosts to cancel the booking. To address these challenges, Airbnb developed Journey Ranker, a new multi-task deep learning model that optimizes for intermediate steps in the user journey, such as reaching positive milestones and avoiding negative milestones.

Online experimentation and measurement: Online experimentation is a common way for companies to make data-driven decisions, but it can be difficult to prove that a change in search UX will drive value when bookings are infrequent and depend on a large number of interactions over a long period of time. Airbnb presented two new methods for variance reduction that rely exclusively on in-experiment data: a model-based leading indicator metric that continually estimates progress toward a delayed binary outcome, and a counterfactual treatment exposure index that quantifies the amount a user is impacted by the treatment.

Causal inference for marketing and user journey optimization: In marketing, one of the challenges is determining how much to spend per channel. This can be reframed as a causal inference problem: how many incremental conversions does each channel drive? Airbnb found that there was moderate to strong correlation across channels, which made it difficult to isolate the impact of one channel from another. They propose hierarchical clustering as a solution to this multicollinearity: DMAs (designated market areas) are clustered hierarchically based on their similarity in marketing impressions over time. This reduced cross-channel correlation by up to 43% and made it possible to isolate the impact of each channel.
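The clustering step can be sketched in a few lines. This is a minimal illustration rather than Airbnb's method: the distance (1 minus Pearson correlation), the average-linkage rule, the DMA names, and the impression series are all assumptions made for the example.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equally long series."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def cluster(series, n_clusters):
    """Average-linkage agglomerative clustering, distance = 1 - correlation."""
    names = list(series)
    dist = {
        (a, b): 1.0 - pearson(series[a], series[b])
        for a in names for b in names if a != b
    }
    clusters = [[name] for name in names]

    def linkage(c1, c2):
        return sum(dist[(a, b)] for a in c1 for b in c2) / (len(c1) * len(c2))

    while len(clusters) > n_clusters:
        # Merge the two clusters whose members are most similar on average.
        pairs = [(i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))]
        i, j = min(pairs, key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]]))
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return [sorted(c) for c in clusters]


# Hypothetical weekly impression counts per DMA (invented for illustration).
impressions = {
    "dma_a": [10, 20, 30, 40, 50],
    "dma_b": [12, 22, 29, 41, 52],
    "dma_c": [50, 40, 30, 20, 10],
    "dma_d": [48, 41, 31, 19, 12],
}
groups = cluster(impressions, n_clusters=2)
print(groups)
```

DMAs whose impressions rise and fall together end up in the same cluster, which is the property the approach relies on when grouping similar markets.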

Data science and analytics infra: Airbnb shared their solution for data science reproducibility and reuse, called Onebrain. Onebrain is a coding standard for configuring data science projects entirely in YAML. Onebrain's backend abstracts away CI/CD, configuration/dependency management, and command-line parsing. Airbnb also discussed a new type of client-side event called Sessions, which can be used to track user context and behaviors within the Airbnb product. Sessions are more flexible than traditional time-based sessions used in web analytics, and they can be tied to specific surfaces like the checkout page, API calls used for observability, or even internal states of the app.

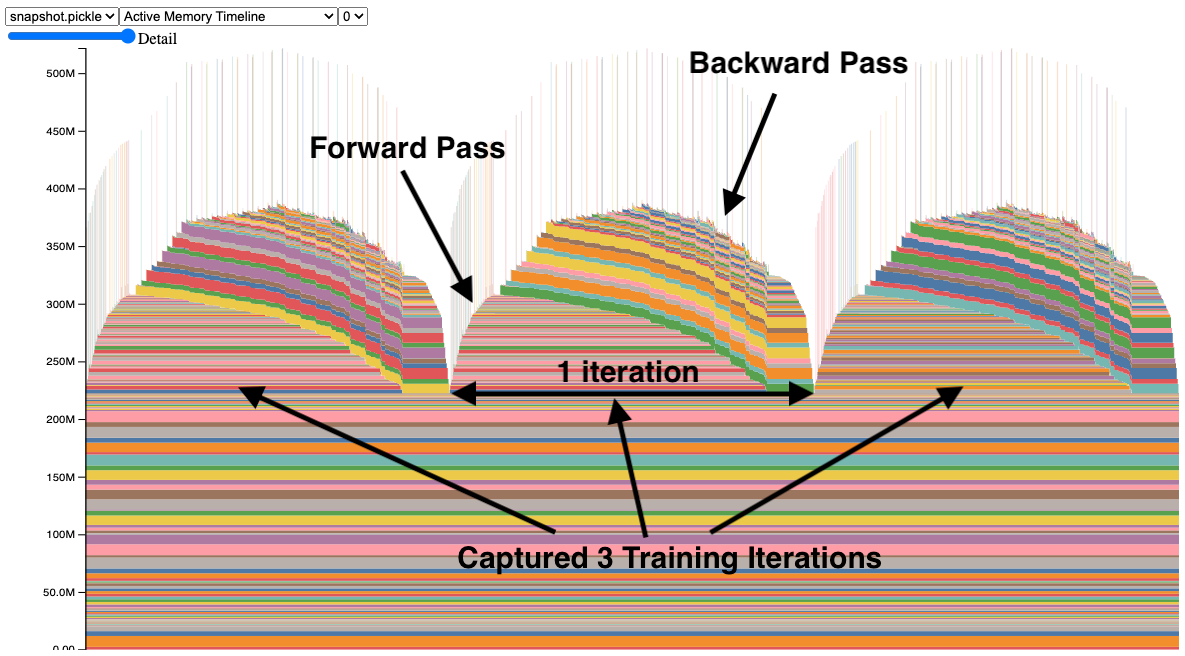

PyTorch wrote a very useful blog post on how to investigate memory issues in PyTorch models.

Some of the tools that can be used to identify the culprit:

Memory Snapshot: This tool provides a fine-grained visualization of memory allocations on the GPU at any given point in your PyTorch script. It can pinpoint the specific tensors and operations consuming the most memory, highlighting potential culprits like large intermediate activations or persistent gradients.

Memory Profiler: This tool tracks memory allocations and deallocations over time throughout your script execution. It reveals patterns and tendencies in memory usage, helping you identify if memory increases steadily or spikes at specific points, guiding your investigation.
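As a rough sketch of how a memory snapshot can be captured, the helper below wraps a workload with the allocator-history calls under `torch.cuda.memory`. These are private, underscore-prefixed functions, so their signatures may change between PyTorch releases; the helper name, its parameters, and the default file path are assumptions for this example.

```python
import torch


def capture_memory_snapshot(workload, path="snapshot.pickle", max_entries=100_000):
    """Run `workload()` while recording CUDA allocator events, then dump a snapshot.

    Returns True if a snapshot was written, False if no CUDA device is available.
    """
    if not torch.cuda.is_available():
        return False
    # Private API (leading underscore): may change between PyTorch releases.
    torch.cuda.memory._record_memory_history(max_entries=max_entries)
    try:
        workload()
        torch.cuda.memory._dump_snapshot(path)
    finally:
        # Passing enabled=None stops the recording.
        torch.cuda.memory._record_memory_history(enabled=None)
    return True


def example_workload():
    # Hypothetical workload: allocate a tensor and run one matmul on the GPU.
    x = torch.randn(1024, 1024, device="cuda")
    (x @ x).sum().item()


written = capture_memory_snapshot(example_workload)
print("snapshot written:", written)
```

On a machine without a GPU the helper simply returns False. When a snapshot is written, the pickle file can be inspected with PyTorch's memory visualizer.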

Common Memory Leaks:

Persistent Gradients: Gradients calculated during backpropagation can accumulate in memory if not explicitly cleared. Adding `optimizer.zero_grad()` after each optimization step ensures these gradients are released.

Unneeded Tensors: Tensors created for temporary calculations might not be deallocated automatically. Running code that does not need gradients under `with torch.no_grad()` (so no autograd graph is retained) or assigning `None` to unused tensors lets their memory be released.

Model Complexity: Large models with a high number of parameters inherently demand more memory. Consider model pruning, quantization, or gradient checkpointing to reduce memory footprint, ideally without sacrificing much accuracy.

Data Issues: Large input tensors or datasets can contribute to memory pressure. Consider pre-processing, chunking, or reducing the dimensionality of your data to lower its memory footprint.
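The first two items can be illustrated with a small CPU-only sketch. The model, optimizer, and random data are placeholders chosen for the example, not taken from the PyTorch post.

```python
import torch

torch.manual_seed(0)
model = torch.nn.Linear(4, 1)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
inputs, targets = torch.randn(8, 4), torch.randn(8, 1)

for _ in range(3):
    # Drop gradients left over from the previous step before computing new ones.
    optimizer.zero_grad(set_to_none=True)
    loss = torch.nn.functional.mse_loss(model(inputs), targets)
    loss.backward()
    optimizer.step()

# Clearing once more after training releases the .grad tensors entirely.
optimizer.zero_grad(set_to_none=True)
grads_cleared = all(p.grad is None for p in model.parameters())

# Under no_grad no autograd graph is built, so activations are not
# kept around for a backward pass that will never happen.
with torch.no_grad():
    prediction = model(inputs)
keeps_graph = prediction.requires_grad

# Dropping the last reference lets the allocator reuse the memory.
prediction = None

print(grads_cleared, keeps_graph)
```

With `set_to_none=True` the gradient tensors are freed rather than filled with zeros, which is what actually returns memory to the allocator.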

Some advanced techniques that can be used:

Automatic Mixed Precision (AMP): AMP allows using a mix of data types (e.g., float16 and float32) during training, reducing memory requirements by up to half while maintaining accuracy.

Gradient Accumulation: This technique accumulates gradients over multiple smaller mini-batches before performing an optimization step, so the effective batch size is kept while each forward and backward pass holds fewer activations in memory, lowering memory spikes.

Gradient Checkpointing: This technique stores only a subset of intermediate activations during the forward pass and recomputes the rest during backpropagation, trading extra compute for reduced memory usage and potentially enabling larger models on limited GPUs.
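A minimal sketch combining gradient accumulation and gradient checkpointing is shown below. It runs on CPU with a toy model; the layer sizes, batch sizes, and learning rate are arbitrary choices for the example, and mixed precision is left out to keep it short.

```python
import torch
from torch.utils.checkpoint import checkpoint

torch.manual_seed(0)
block = torch.nn.Sequential(
    torch.nn.Linear(4, 16), torch.nn.ReLU(), torch.nn.Linear(16, 1)
)
optimizer = torch.optim.SGD(block.parameters(), lr=0.01)

data = torch.randn(8, 4)
labels = torch.randn(8, 1)
accumulation_steps = 4  # 4 micro-batches of 2 samples = effective batch of 8
optimizer_steps = 0

micro_batches = zip(data.chunk(accumulation_steps), labels.chunk(accumulation_steps))
optimizer.zero_grad(set_to_none=True)
for step, (x, y) in enumerate(micro_batches, start=1):
    # Checkpointing: activations inside `block` are recomputed during
    # backward instead of being stored during forward.
    prediction = checkpoint(block, x, use_reentrant=False)
    # Scale so the accumulated gradient matches the mean over the full batch.
    loss = torch.nn.functional.mse_loss(prediction, y) / accumulation_steps
    loss.backward()  # gradients add up in .grad across micro-batches
    if step % accumulation_steps == 0:
        optimizer.step()
        optimizer.zero_grad(set_to_none=True)
        optimizer_steps += 1

print("optimizer steps:", optimizer_steps)
```

Only one optimizer step is taken for the eight samples, while each forward and backward pass only ever sees two of them at a time.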

Graph Machine Learning ICML 2023, written by Intel, gives a good overview of the graph-related papers at the conference.

1. Graph Transformers:

Exphormer:

Introduces a novel sparse attention mechanism based on exponentially decaying kernels.

Achieves superior accuracy and efficiency compared to GraphGPS, especially for large graphs.

Employs an efficient "lazy update" scheme to dynamically adapt attention weights.

2. Geometric Learning:

Virtual Nodes in GCNs:

Introduces the concept of virtual nodes to inject arbitrary features into GCNs.

Enables GCNs to approximate linear attention, leading to more expressive representations.

Offers greater flexibility and adaptability compared to standard GCNs.

3. Generative Models:

GeoLDM:

A diffusion model specifically designed for generating 3D molecules.

Utilizes geometric properties like atom types and bond angles during the diffusion process.

Produces realistic and diverse molecules with properties akin to real-world counterparts.

Additional Technical Points:

Equivariant Models: The article emphasizes the importance of equivariant models for tasks involving graph symmetries. Equivariant models ensure outputs remain consistent under graph transformations.

Tensor Order & Body Order: The article highlights the significance of tensor order and body order in feature representation. Spherical and many-body interactions tend to be more expressive than scalars and pairwise distances.

Geometric Clifford Algebra Networks (GCANs): Going beyond vectors, GCANs leverage Clifford algebras to capture higher-order relationships and symmetries in graphs, potentially leading to more powerful representations.
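To show what "consistent under graph transformations" means in the simplest case, the sketch below checks permutation equivariance of one sum-aggregation message-passing step: relabelling the nodes and then applying the layer gives the same result as applying the layer and then relabelling. Node relabelling is used here as the symmetry for simplicity; the geometric models discussed in the article deal with rotations and translations instead. The graph, features, and function names are made up for the example.

```python
def aggregate(adjacency, features):
    """One message-passing step: each node sums its neighbours' features."""
    n = len(adjacency)
    return [sum(adjacency[i][j] * features[j] for j in range(n)) for i in range(n)]


def permute_graph(adjacency, features, perm):
    """Relabel nodes so that new node k is old node perm[k]."""
    n = len(perm)
    new_adjacency = [[adjacency[perm[i]][perm[j]] for j in range(n)] for i in range(n)]
    new_features = [features[perm[i]] for i in range(n)]
    return new_adjacency, new_features


# A 3-node star graph: node 0 is connected to nodes 1 and 2.
adjacency = [[0, 1, 1], [1, 0, 0], [1, 0, 0]]
features = [1.0, 2.0, 4.0]
perm = [2, 0, 1]

out = aggregate(adjacency, features)
perm_adjacency, perm_features = permute_graph(adjacency, features, perm)

out_of_permuted = aggregate(perm_adjacency, perm_features)  # layer(permuted graph)
permuted_out = [out[p] for p in perm]                       # permuted layer(graph)
is_equivariant = out_of_permuted == permuted_out
print(out, out_of_permuted, is_equivariant)
```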

Libraries

Decision-making involves understanding how different variables affect each other and predicting the outcome when some of them are changed to new values. For instance, given an outcome variable, one may be interested in determining how potential actions may affect it, understanding what led to its current value, or simulating what would happen if some variables are changed. Answering such questions requires causal reasoning. DoWhy is a Python library that guides you through the various steps of causal reasoning and provides a unified interface for answering causal questions.

DoWhy provides a wide variety of algorithms for effect estimation, prediction, quantification of causal influences, diagnosis of causal structures, root cause analysis, interventions and counterfactuals. A key feature of DoWhy is its refutation and falsification API that can test causal assumptions for any estimation method, thus making inference more robust and accessible to non-experts.
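The idea behind effect estimation followed by a refutation check can be sketched without any library. The example below does not call DoWhy; it is a standard-library illustration on synthetic data, with a single binary confounder, a stratified (backdoor-adjusted) estimate, and a placebo test that shuffles the treatment. All variable names and the data-generating numbers are assumptions made for the example.

```python
import random


def mean(values):
    return sum(values) / len(values)


def stratified_effect(rows):
    """Backdoor adjustment for one binary confounder z: average the
    per-stratum difference in mean outcome, weighted by stratum size."""
    effect = 0.0
    for z in (0, 1):
        stratum = [r for r in rows if r["z"] == z]
        treated = [r["y"] for r in stratum if r["t"] == 1]
        control = [r["y"] for r in stratum if r["t"] == 0]
        effect += (mean(treated) - mean(control)) * len(stratum) / len(rows)
    return effect


# Synthetic data: z influences both treatment and outcome; true effect of t is 2.
rng = random.Random(0)
rows = []
for _ in range(20000):
    z = rng.randint(0, 1)
    t = 1 if rng.random() < (0.8 if z else 0.2) else 0
    y = 2.0 * t + 3.0 * z + rng.gauss(0, 1)
    rows.append({"z": z, "t": t, "y": y})

naive = mean([r["y"] for r in rows if r["t"] == 1]) - mean([r["y"] for r in rows if r["t"] == 0])
adjusted = stratified_effect(rows)

# Placebo refutation: a randomly shuffled treatment should show no effect.
shuffled = [r["t"] for r in rows]
rng.shuffle(shuffled)
placebo_rows = [{"z": r["z"], "t": t, "y": r["y"]} for r, t in zip(rows, shuffled)]
placebo = stratified_effect(placebo_rows)

print(f"naive={naive:.2f} adjusted={adjusted:.2f} placebo={placebo:.2f}")
```

The naive difference is inflated by the confounder, the adjusted estimate lands near the true effect of 2, and the placebo estimate stays near zero, which is the kind of sanity check a refutation step is meant to provide.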

StableHLO is an operation set for high-level operations (HLO) in machine learning (ML) models. Essentially, it's a portability layer between different ML frameworks and ML compilers: ML frameworks that produce StableHLO programs are compatible with ML compilers that consume StableHLO programs.

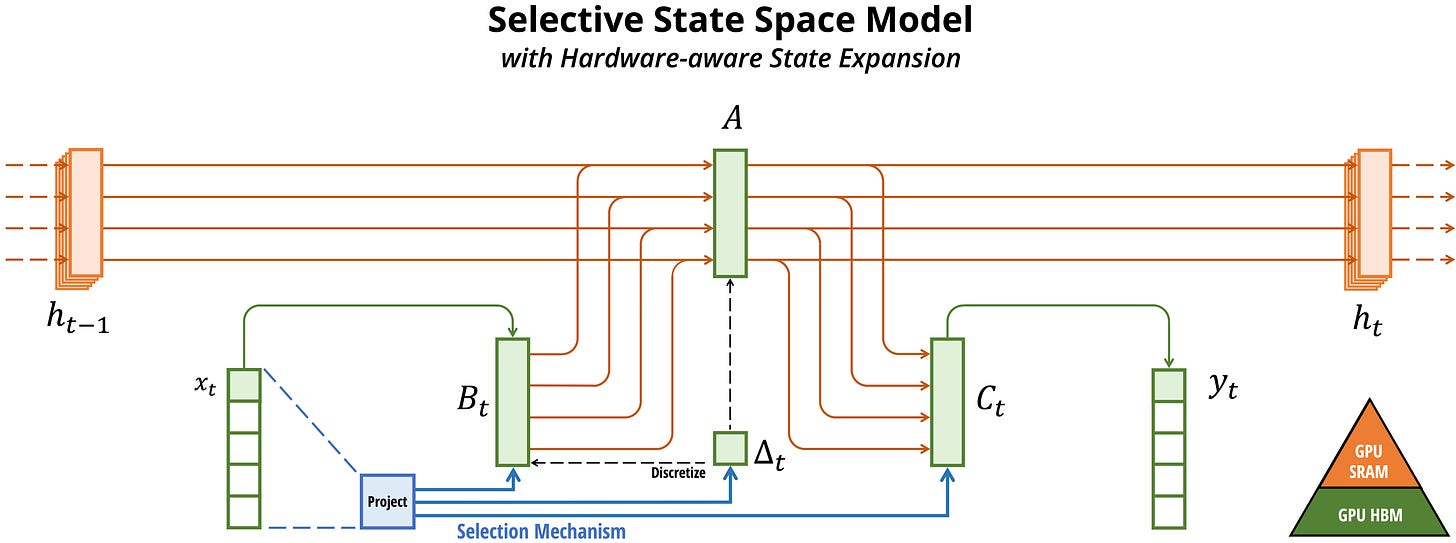

Mamba is a new state space model architecture showing promising performance on information-dense data such as language modeling, where previous subquadratic models fall short of Transformers. It is based on the line of progress on structured state space models, with an efficient hardware-aware design and implementation in the spirit of FlashAttention.
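For readers unfamiliar with state space models, the sketch below runs a plain linear recurrence h_t = a * h_{t-1} + b * x_t, y_t = c . h_t with a diagonal state matrix. This is only the basic building block that the structured state space line of work starts from; Mamba's input-dependent (selective) parameters and its hardware-aware scan are not shown, and the function name and parameter values are invented for the example.

```python
def ssm_scan(a, b, c, inputs):
    """Run a diagonal linear state space recurrence over a scalar sequence.

    a, b, c are lists with one entry per state dimension;
    inputs is a list of scalars. Returns one output per input.
    """
    state = [0.0] * len(a)
    outputs = []
    for x in inputs:
        # State update: each dimension decays by a_i and absorbs the input via b_i.
        state = [a_i * h_i + b_i * x for a_i, h_i, b_i in zip(a, state, b)]
        # Readout: project the state to a scalar output with c.
        outputs.append(sum(c_i * h_i for c_i, h_i in zip(c, state)))
    return outputs


# An impulse at t=0: the first state dimension remembers it with decay 0.5,
# the second forgets it immediately (decay 0.0).
outputs = ssm_scan(a=[0.5, 0.0], b=[1.0, 1.0], c=[1.0, 1.0], inputs=[1.0, 0.0, 0.0])
print(outputs)
```

The loop touches each sequence element once, so the cost grows linearly with sequence length rather than quadratically as in full attention.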

mamba-minimal is a simple, minimal implementation of Mamba in one file of PyTorch.

Featuring:

Numerical output equivalent to the official implementation for both the forward and backward pass

Simplified, readable, annotated code

Does NOT include:

Speed. The official implementation is heavily optimized, and these optimizations are core contributions of the Mamba paper. I kept most implementations simple for readability.

Proper parameter initialization (though this could be added without sacrificing readability)

Intel® Extension for Transformers is an innovative toolkit designed to accelerate GenAI/LLM everywhere with the optimal performance of Transformer-based models on various Intel platforms, including Intel Gaudi2, Intel CPU, and Intel GPU. The toolkit provides the below key features and examples:

Seamless user experience of model compressions on Transformer-based models by extending Hugging Face transformers APIs and leveraging Intel® Neural Compressor

Advanced software optimizations and unique compression-aware runtime (released with NeurIPS 2022's paper Fast Distilbert on CPUs and QuaLA-MiniLM: a Quantized Length Adaptive MiniLM, and NeurIPS 2021's paper Prune Once for All: Sparse Pre-Trained Language Models)

Optimized Transformer-based model packages such as Stable Diffusion, GPT-J-6B, GPT-NEOX, BLOOM-176B, T5, Flan-T5, and end-to-end workflows such as SetFit-based text classification and document level sentiment analysis (DLSA)

llama2.rs is a Rust implementation of Llama2 inference on CPU.

The goal is to be as fast as possible.

It has the following features:

Support for 4-bit GPT-Q Quantization

Batched prefill of prompt tokens

SIMD support for fast CPU inference

Memory mapping, loads 70B instantly.

Static size checks for safety

Support for Grouped Query Attention (needed for big Llamas)

Python calling API