Meet PowerInfer: A Fast Large Language Model (LLM) on a Single Consumer-Grade GPU that Speeds up Machine Learning Model Inference By 11 Times

Marktechpost

DECEMBER 23, 2023

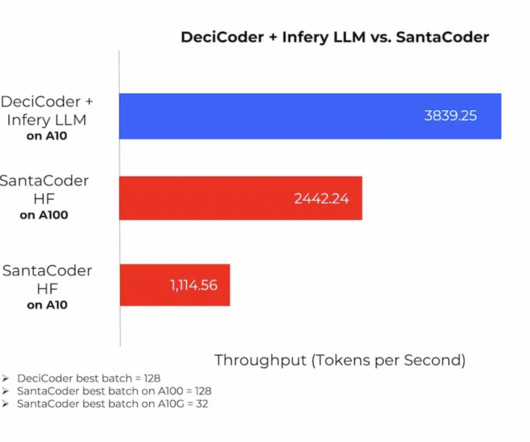

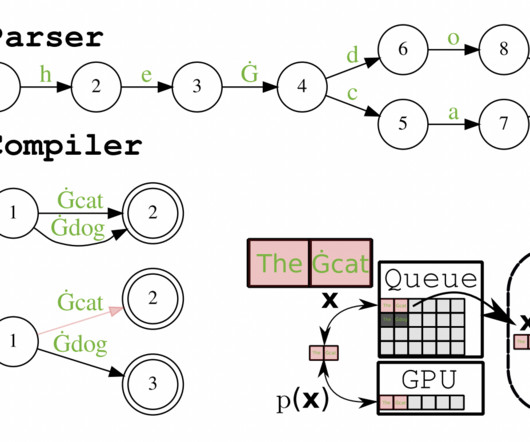

In a recent study, a team of researchers presented PowerInfer, an efficient LLM inference system designed for local deployment on a single consumer-grade GPU. According to the team, PowerInfer is a GPU-CPU hybrid inference engine. Check out the paper and the GitHub repository.
