AI for Money Managers: Avoid the Black Box – And Do This Instead

Unite.AI

MARCH 14, 2024

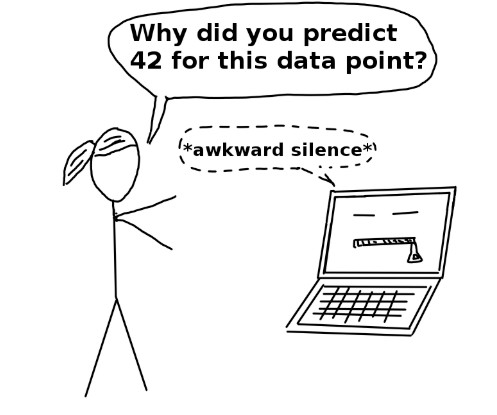

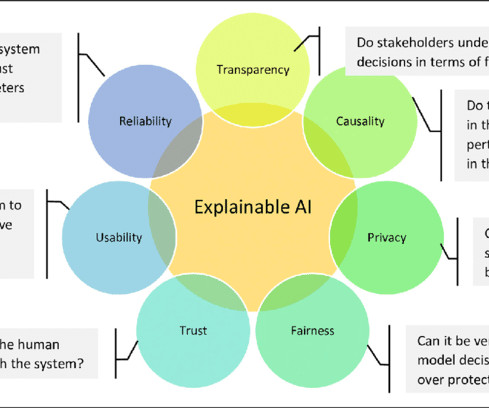

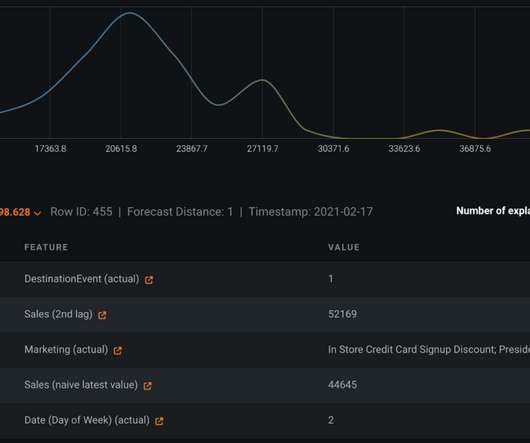

The opportunities afforded by AI are truly significant, but can we trust black box AI to produce the right results? Instead of using AI systems they cannot explain (so-called black box systems), money managers could use AI platforms built on transparent techniques that explain how they arrive at their conclusions.
