Introduction to Large Language Models (LLMs): An Overview of BERT, GPT, and Other Popular Models

John Snow Labs

JUNE 27, 2023

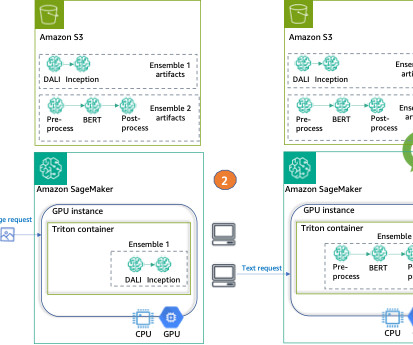

At their core, LLMs are deep neural networks trained on vast amounts of text, from which they learn complex language patterns. In this section, we provide an overview of two widely recognized LLMs, BERT and GPT, and introduce other notable models such as T5, Pythia, Dolly, BLOOM, Falcon, StarCoder, Orca, LLaMA, and Vicuna.
