Is There a Library for Cleaning Data before Tokenization? Meet the Unstructured Library for Seamless Pre-Tokenization Cleaning

Marktechpost

MAY 9, 2024

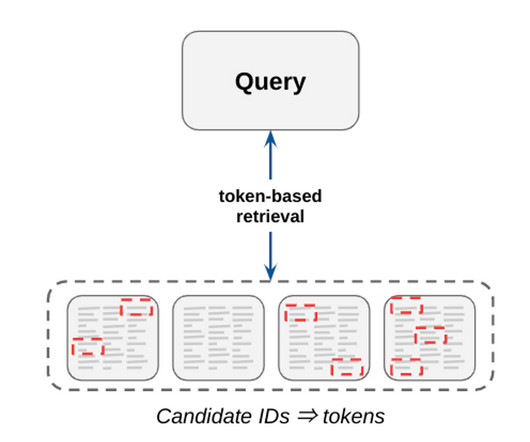

In Natural Language Processing (NLP) tasks, data cleaning is an essential step before tokenization, particularly when the text separates words with underscores, slashes, or other symbols instead of spaces.
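To make the problem concrete, here is a minimal sketch of this kind of pre-tokenization cleaning using only Python's standard `re` module. It does not use the Unstructured library's own API; the function names `clean_before_tokenization` and `tokenize`, and the choice of which symbols count as separators, are assumptions made for illustration.

```python
import re

# Assumption: these symbols stand in for spaces in the input text.
# Adjust the character class to match the data at hand.
_SEPARATORS = re.compile(r"[_/\\|]+")
_WHITESPACE = re.compile(r"\s+")


def clean_before_tokenization(text: str) -> str:
    """Replace separator symbols with spaces and collapse repeated whitespace."""
    text = _SEPARATORS.sub(" ", text)
    return _WHITESPACE.sub(" ", text).strip()


def tokenize(text: str) -> list[str]:
    """Naive whitespace tokenizer applied after cleaning (illustrative only)."""
    return clean_before_tokenization(text).split()


if __name__ == "__main__":
    print(tokenize("natural_language/processing  is_fun"))
```

A blanket replacement like this also splits URLs, file paths, and dates written with slashes, so in practice the separator set would likely need to be narrowed or applied only to selected fields.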
