The Full Story of Large Language Models and RLHF

AssemblyAI

MAY 3, 2023

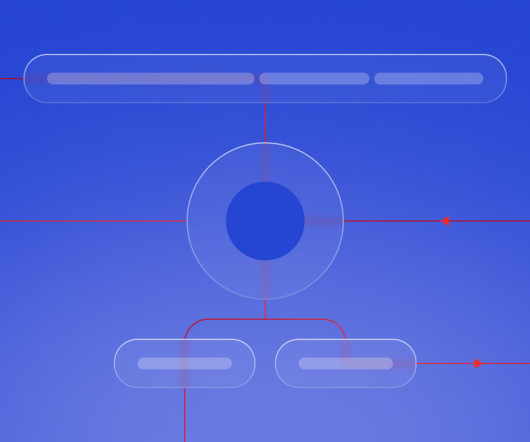

In recent months, a distinctly human-centric approach called Reinforcement Learning from Human Feedback (RLHF) has rapidly emerged as a leading technique for AI alignment. How does a language model learn? What is RLHF, and how does it help make language models more aligned with human values?
