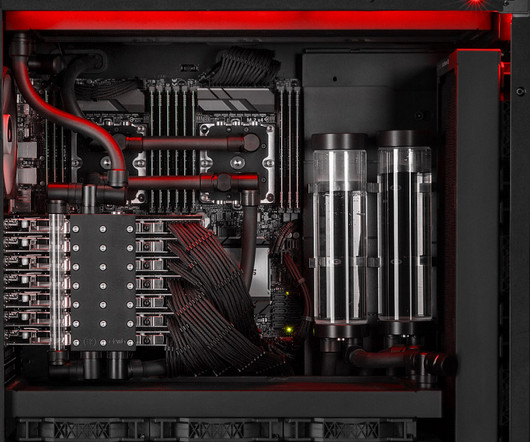

'Big Boss' Workstation Debuts With 7 RTX 4090 GPUs, $31k Price Tag

Extreme Tech

AUGUST 22, 2023

This European mega machine will easily shred any workload.

tag gpus

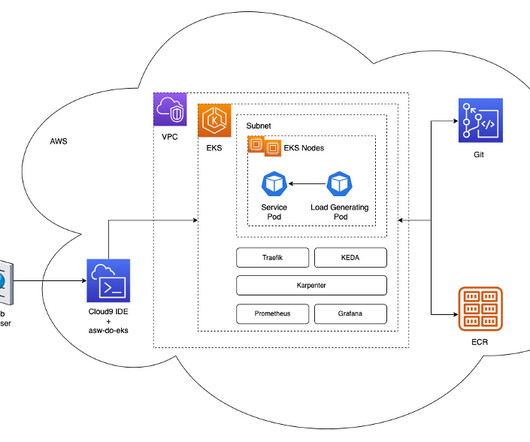

AWS Machine Learning Blog

APRIL 19, 2024

Initially, our model fine-tuning took hours of CPU time, so a framework for scaling model fine-tuning on GPUs was imperative. Our deep learning models have non-trivial requirements: they are gigabytes in size, are numerous and heterogeneous, and require GPUs for fast inference and fine-tuning. Karpenter adds g4dn.metal and g4dn.12xlarge

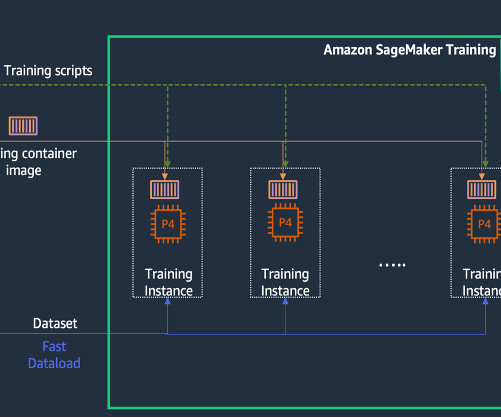

AWS Machine Learning Blog

NOVEMBER 20, 2023

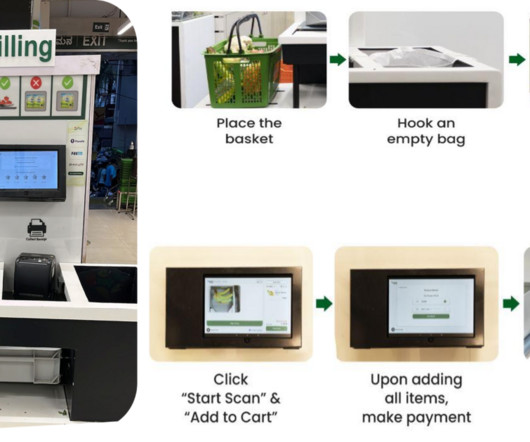

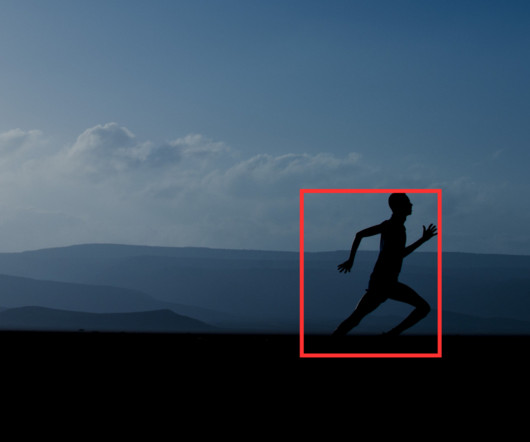

KT’s AI Food Tag is an AI-based dietary management solution that identifies the type and nutritional content of food in photos using a computer vision model. The AI Food Tag can help patients with chronic diseases such as diabetes manage their diets. In this post, we describe KT’s model development journey and success using SageMaker.

Marktechpost

MARCH 30, 2024

The Core of Pollen-Vision At the heart of Pollen-Vision are several pivotal models, each selected for its zero-shot capability and real-time performance on consumer-grade GPUs. Core models within Pollen-Vision, such as OWL-VIT, Mobile Sam, and RAM, offer diverse capabilities from object localization to image segmentation and tagging.

NVIDIA

SEPTEMBER 21, 2023

The NVIDIA Studio laptop lineup is expanding with the new Microsoft Surface Laptop Studio 2, powered by GeForce RTX 4060, GeForce RTX 4050 or NVIDIA RTX 2000 Ada Generation Laptop GPUs, providing powerful performance and versatility for creators. faster on GeForce RTX and NVIDIA RTX GPUs compared to Macs.

NVIDIA

JULY 6, 2023

In addition, get a glimpse of two cloud-based AI apps, Wondershare Filmora and Trimble SketchUp Go, powered by NVIDIA RTX GPUs, and learn how they can elevate and automate content creation. Those who own NVIDIA or GeForce RTX GPUs can take advantage of Tensor Cores that utilize AI to accelerate over 100 apps.

NVIDIA

JANUARY 16, 2024

Daz features an AI denoiser for high-performance interactive rendering that can also be accelerated by RTX GPUs. Here, Brellias’ RTX GPU accelerated the color grading, video editing and color scoping processes, dramatically speeding his creative workflow.

OCTOBER 9, 2023

The -t (or --tag) flag gives your image a name tag that you can use to reference the build later. The --gpus flag specifies which GPUs on the local machine should be available to the container. Example: docker build. -t
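
As a rough illustration of the two flags described above, the following Python sketch assembles the corresponding Docker command lines without running them. The image name `my-image`, the helper names `build_command` and `run_command`, and the default GPU selector `all` are assumptions made for illustration; the example in the snippet is truncated, so this is not a reconstruction of it. In the Docker CLI, `-t` is an option of `docker build`, while `--gpus` is an option of `docker run`.

```python
import shlex


def build_command(image_name: str, context: str = ".") -> list[str]:
    """Return the argv for building an image and naming it with -t."""
    return ["docker", "build", "-t", image_name, context]


def run_command(image_name: str, gpus: str = "all") -> list[str]:
    """Return the argv for running a container with the given GPUs exposed."""
    return ["docker", "run", "--rm", "--gpus", gpus, image_name]


if __name__ == "__main__":
    # Hypothetical image name; print the commands instead of executing them.
    print(shlex.join(build_command("my-image")))
    print(shlex.join(run_command("my-image")))
```

Returning argument lists rather than a single string means the commands can later be handed to `subprocess.run` without shell quoting concerns.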

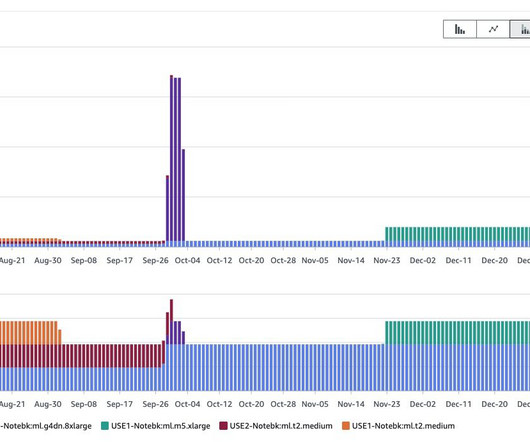

AWS Machine Learning Blog

MAY 30, 2023

You can also apply additional filters such as account number, Amazon Elastic Compute Cloud (Amazon EC2) instance type, cost allocation tag, Region, and more. You can also include cost-allocation tags in your query for an additional level of granularity. You can build custom queries to look up AWS CUR data using standard SQL.

NVIDIA

DECEMBER 5, 2023

Be sure to tag #WinterArtChallenge to join. Share your winter-themed art (like this incredible one created on an RTX GPU by @rafianimates) using the hashtag for a chance to be featured on our social channels! Winter has returned and so has our #WinterArtChallenge!

Mlearning.ai

MAY 5, 2023

This includes things like text preprocessing, part-of-speech tagging, parsing, and sentiment analysis. GPU Computing Skills: LLMs typically require a lot of computational resources, so it’s essential to have experience with GPU computing.

Unite.AI

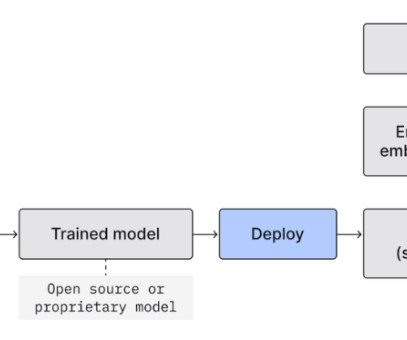

OCTOBER 16, 2023

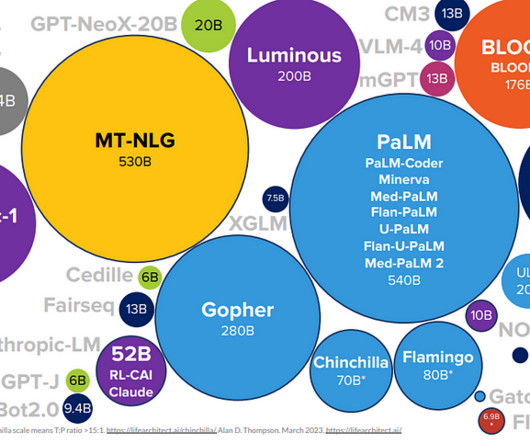

The roadmap to LLM integration has three predominant routes: Prompting General-Purpose LLMs: Models like ChatGPT and Bard offer a low threshold for adoption with minimal upfront costs, albeit with a potential price tag in the long haul.

AWS Machine Learning Blog

FEBRUARY 13, 2024

How the SMDDP library helped reduce training time, cost, and complexity In traditional distributed data training, the training framework assigns ranks to GPUs (workers) and creates a replica of your model on each GPU.
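
The data-parallel pattern described above (one rank per GPU, one model replica per rank) can be sketched in a single process. This is a toy simulation and not the SMDDP library's API: the one-parameter model, the function names, and the plain averaging used as a stand-in for all-reduce are all assumptions for illustration. It also assumes the dataset divides evenly across ranks.

```python
def gradient(w: float, batch: list[tuple[float, float]]) -> float:
    """Mean gradient of the squared error (w*x - y)^2 with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def data_parallel_step(w, data, world_size, lr=0.01):
    """Simulate one training step with `world_size` workers (ranks)."""
    # Each rank receives an equally sized shard and holds its own copy of w.
    shards = [data[rank::world_size] for rank in range(world_size)]
    local_grads = [gradient(w, shard) for shard in shards]
    # Stand-in for all-reduce: average the per-rank gradients.
    avg_grad = sum(local_grads) / world_size
    # Every replica applies the same update, so the replicas stay identical.
    return [w - lr * avg_grad for _ in range(world_size)]


if __name__ == "__main__":
    samples = [(float(x), 3.0 * x) for x in range(1, 9)]
    print(data_parallel_step(0.0, samples, world_size=4))
```

Because the shards are equally sized, the averaged gradient matches the gradient a single worker would compute over the full batch, which is why the replicas remain in sync after each step.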

Topbots

MARCH 31, 2024

Yet, TSMC distinguishes itself even further as the sole entity capable of reliably producing the most advanced chips, such as Nvidia’s H100 GPUs, which are set to power the next generation of AI technologies. This level of investment has enabled TSMC to create a virtuous cycle of technological advancement and financial return.

AssemblyAI

JANUARY 19, 2023

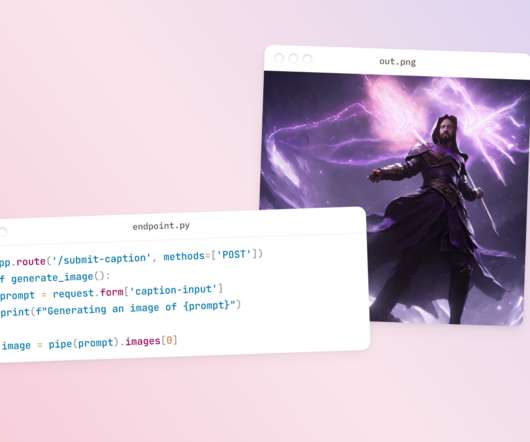

Stable Diffusion allows you to create incredible images like the one below with only a sentence; but it requires a GPU to run in a reasonable amount of time. Since GPUs are expensive and in short supply, many users opt to instead pay for credits in a web app like DreamStudio in order to use Stable Diffusion in the cloud.

Mlearning.ai

FEBRUARY 6, 2023

If there are multiple GPUs on the selected instance we will use each GPU for inference on each file in parallel. ENV PYTHONUNBUFFERED=TRUE ENV PYTHONDONTWRITEBYTECODE=TRUE Once we have the DOCKER file we need to build it to create an image and tag that image before we push it to Amazon ECR. docker build -t ${algorithm_name}.
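
One plausible reading of the multi-GPU step above is that input files are spread across the available GPUs and processed concurrently. The sketch below illustrates that reading only; `assign_files_to_gpus`, `fake_infer`, and `run_parallel` are hypothetical names, the round-robin policy is an assumption, and `fake_infer` is a placeholder that does no real inference.

```python
from concurrent.futures import ThreadPoolExecutor


def assign_files_to_gpus(files, num_gpus):
    """Round-robin plan: GPU i gets files i, i + num_gpus, i + 2*num_gpus, ..."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return {gpu: files[gpu::num_gpus] for gpu in range(num_gpus)}


def fake_infer(gpu_id, path):
    """Placeholder for a real model call pinned to one GPU."""
    return f"{path} processed on gpu {gpu_id}"


def run_parallel(files, num_gpus):
    """Run one worker thread per GPU over that GPU's share of the files."""
    plan = assign_files_to_gpus(files, num_gpus)

    def worker(gpu_id):
        return [fake_infer(gpu_id, path) for path in plan[gpu_id]]

    with ThreadPoolExecutor(max_workers=num_gpus) as pool:
        per_gpu = list(pool.map(worker, range(num_gpus)))
    # Flatten the per-GPU result lists, ordered by GPU id.
    return [result for results in per_gpu for result in results]


if __name__ == "__main__":
    print(run_parallel(["a.wav", "b.wav", "c.wav"], num_gpus=2))
```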

Bugra Akyildiz

MAY 12, 2024

For example, when running the Llama-2 70B model on 4 A100x80GB GPUs, DeepSpeed-FastGen achieves up to 2 times higher throughput compared to vLLM. These techniques include: Tensor Parallelism DeepSpeed-FastGen leverages tensor parallelism, a technique that distributes the computational load across multiple GPUs or accelerators.
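
To make the idea of tensor parallelism concrete, here is a minimal pure-Python sketch of a column-split matrix multiply: each simulated device holds a slice of the weight matrix, computes its part of the output, and the parts are concatenated. It is not DeepSpeed-FastGen's implementation; the function names are hypothetical, and the column count is assumed to divide evenly by the number of devices.

```python
def matmul(x, w):
    """Multiply a vector x (length n) by a matrix w (n rows, m columns)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def split_columns(w, parts):
    """Split w column-wise into `parts` contiguous shards of equal width."""
    width = len(w[0]) // parts
    return [[row[p * width:(p + 1) * width] for row in w] for p in range(parts)]


def tensor_parallel_matmul(x, w, parts):
    """Compute x @ w with the columns of w sharded across `parts` devices."""
    shards = split_columns(w, parts)
    # Each simulated device multiplies the full input by its own shard.
    partial_outputs = [matmul(x, shard) for shard in shards]
    # Concatenating the partial outputs plays the role of an all-gather.
    return [value for out in partial_outputs for value in out]


if __name__ == "__main__":
    x = [1.0, 2.0]
    w = [[1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0]]
    print(tensor_parallel_matmul(x, w, parts=2))
```

Each device stores only its shard of the weights, which is the property that lets a model too large for one GPU's memory be spread over several.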

Unite.AI

JULY 27, 2023

Due to the substantial computing power required, especially from the graphics processing units (GPUs) and video memory usage for the denoising process, Midjourney's service comes with a price tag. hours of GPU time, enough for approximately 200 image generations. Keep track of your GPU usage by using the /info command.

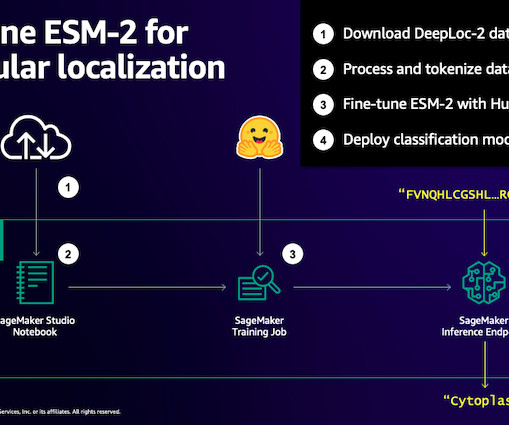

AWS Machine Learning Blog

MARCH 6, 2024

It also means that you need to use hardware, especially GPUs, with large amounts of memory to store the model parameters. For example, in 2023, a research team described training a 100 billion-parameter pLM on 768 A100 GPUs for 164 days!

IBM Journey to AI blog

SEPTEMBER 20, 2023

On the other hand, tuning of these base FMs for downstream tasks—which only require a few tens or hundreds of labeled data samples and inference serving—can be accomplished with only a few GPUs at the enterprise edge.

AWS Machine Learning Blog

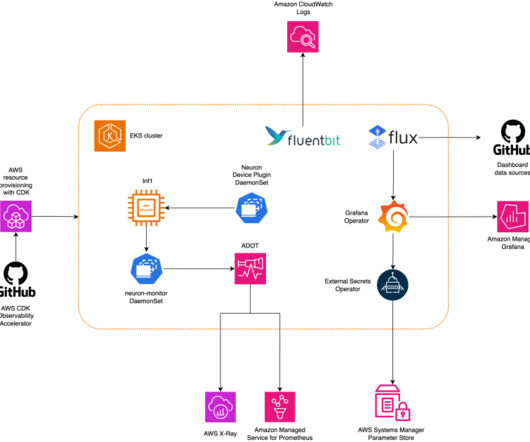

APRIL 17, 2024

Despite the availability of advanced distributed training libraries, it’s common for training and inference jobs to need hundreds of accelerators (GPUs or purpose-built ML chips such as AWS Trainium and AWS Inferentia), and therefore tens or hundreds of instances. or later NPM version 10.0.0

AWS Machine Learning Blog

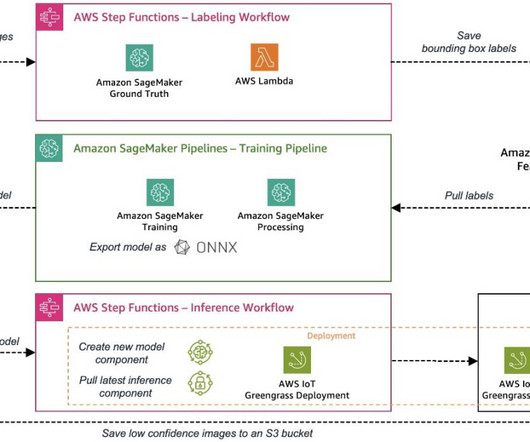

OCTOBER 2, 2023

The sample use case used for this series is a visual quality inspection solution that can detect defects on metal tags, which you can deploy as part of a manufacturing process. Prepare Edge devices often come with limited compute and memory compared to a cloud environment where powerful CPUs and GPUs can run ML models easily.

AWS Machine Learning Blog

APRIL 4, 2024

The following figure shows an example of our tagging system. Improved infrastructure – With SageMaker, we upgraded our existing infrastructure, and we are now using newer AWS instances with newer GPUs such as g5.xlarge. For example, we identify if the brand is on a banner or a shirt.

APRIL 17, 2023

And don’t forget to share your stunning convergence plots on Twitter, tagging us for a chance to win a surprise! To overcome this problem, we use GPUs. The problem is these GPUs are expensive and become outdated quickly. GPUs are great because they take your Neural Network and train it quickly. What's next?

Artificial Corner

AUGUST 29, 2023

Spark conversations through the cloud with serverless GPUs powering your most advanced Hugging Face large language models. In fact, securing access to GPUs requires a lot of upfront investment and technical overhead.

AWS Machine Learning Blog

NOVEMBER 1, 2023

f Dockerfile -t ${container_name} docker tag ${container_name} ${full_name} docker push ${full_name} LLM inference with TGI The VLP solution in this post employs the LLM in tandem with LangChain, harnessing the chain-of-thought (CoT) approach for more accurate intent classification.

NVIDIA

JULY 11, 2023

Join by showing us a photo/video of how one of your art projects started and then one of the final result + tag #StartToFinish. So it was a no-brainer for us to use NVIDIA RTX GPUs.” Welcome to our new #StartToFinish challenge. Check out these great examples from @rafianimates made with #OpenUSD in @NVIDIAOmniverse. Traveling soon?

The MLOps Blog

JUNE 27, 2023

You can use it to speed up the inference of deep learning models on NVIDIA GPUs. TensorRT can optimize deep learning models for inference on NVIDIA GPUs, which can lead to significant performance improvements. optimizes and orchestrates GPU compute resources for AI and deep learning workloads. Reduce the size of their models.

NVIDIA

JULY 13, 2023

It uses GPUs, DPUs and networking along with CPUs to accelerate applications across science, analytics, engineering, as well as consumer and enterprise use cases. And the impact of AI adoption could be greater than the inventions of the internet, mobile broadband and the smartphone — combined. In the U.S.,

AWS Machine Learning Blog

MAY 25, 2023

We need access to accelerated instances (GPUs) for hosting the LLMs. Prerequisites To implement the solution provided in this post, you should have an AWS account and familiarity with LLMs, OpenSearch Service and SageMaker.

Viso.ai

FEBRUARY 7, 2024

Action recognition is typically applied in scenarios where understanding the overall action category is enough, such as video tagging, content-based video retrieval, and activity monitoring. This is done without providing temporal localization information. Benefits of YOWO YOWO boasts a unique architecture and improved action localization.

Marek Rei

JANUARY 17, 2018

They incorporate fMRI features into POS tagging, under the assumption that reading semantically/functionally different words will activate the brain in different ways. There does not seem to be any auxiliary task that would help on all main tasks, but chunking and semantic tagging seem to perform best. Copenhagen.

AWS Machine Learning Blog

JANUARY 9, 2023

To meet the latency and throughput goals of ML applications, GPU instances are preferred over CPU instances (given the computational power GPUs offer). With MME support for GPU, you can deploy thousands of deep learning models behind one SageMaker endpoint.

Snorkel AI

APRIL 11, 2023

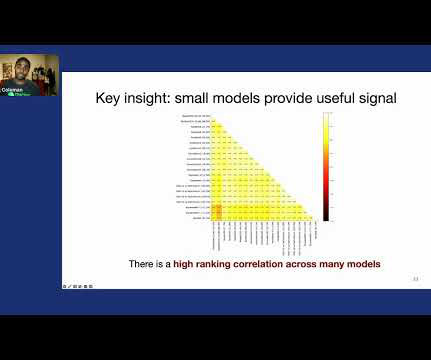

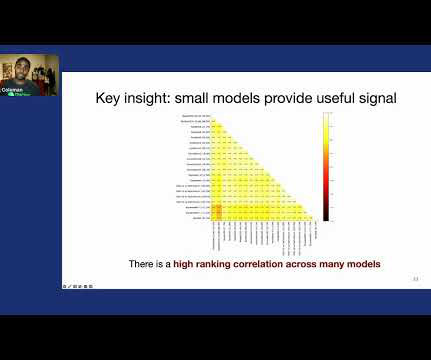

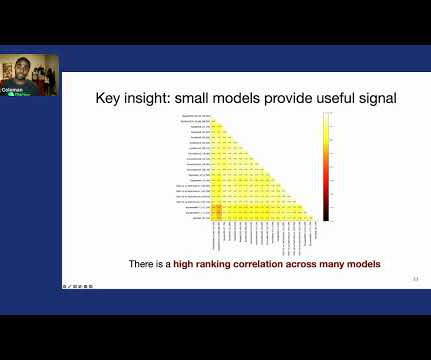

And then on Amazon reviews, with several million reviews, we actually take a very small, shallow model, fastText–which can be trained in a matter of minutes on the laptop–to select data for a much larger model, VDCNN29, which takes close to 16 hours to train on GPUs. So where might we have these large label datasets?

John Snow Labs

JULY 19, 2023

Despite the higher price tag, the versatility, advanced capabilities, and unique features of Claude Instant and Claude-v1 demonstrate their value proposition in the rapidly evolving LLM landscape. These numbers underscore Cerebras’ competitive edge in the LLM landscape in terms of both efficiency and cost-effectiveness.

Explosion

AUGUST 1, 2019

The doc.tensor attribute gives you one row per spaCy token, which is useful if you’re working on token-level tasks such as part-of-speech tagging or spelling correction. Multiple GPUs are also not currently supported. This variable gives you a tensor with one row per wordpiece token. We are working on both of these issues.

The MLOps Blog

OCTOBER 3, 2023

The layer above that is where you have the providers or, for a lot of folks – if you’re a solo data scientist, for example –maybe you just need access to GPUs for machine learning models. They just need to kind of tag things like “Hey, by the way, we’re using these data sources. We’re creating these features.

Lexalytics

APRIL 5, 2021

This success came from the efficient use of GPUs, [rectified linear units], a new regularization technique called dropout, and techniques to generate more training examples by deforming the existing ones. Manning and Yoram Singer (2003) “ Feature-rich part-of-speech tagging with a cyclic dependency network ” David G.
