Researchers at Intel Labs Introduce LLaVA-Gemma: A Compact Vision-Language Model Leveraging the Gemma Large Language Model in Two Variants (Gemma-2B and Gemma-7B)

Marktechpost

APRIL 6, 2024

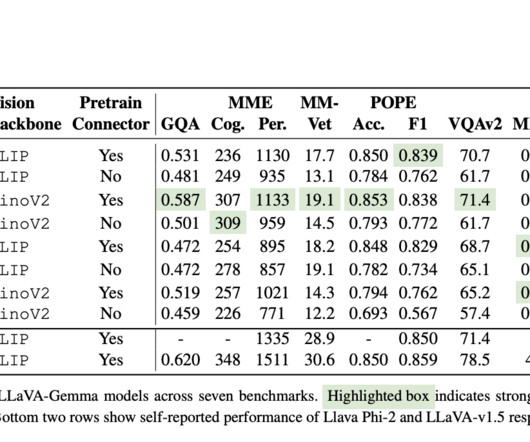

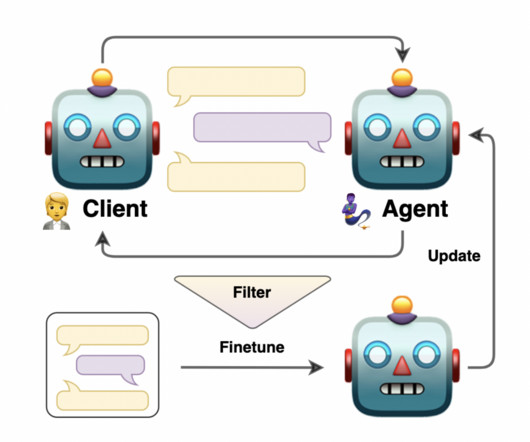

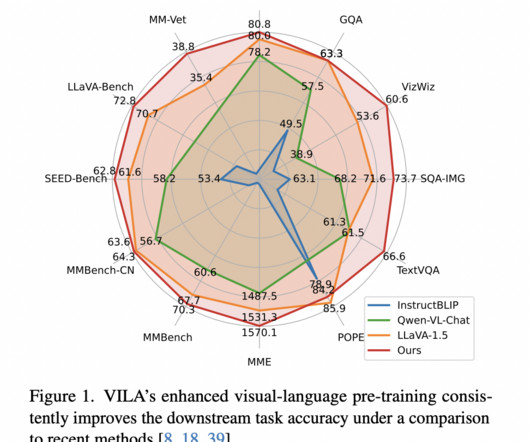

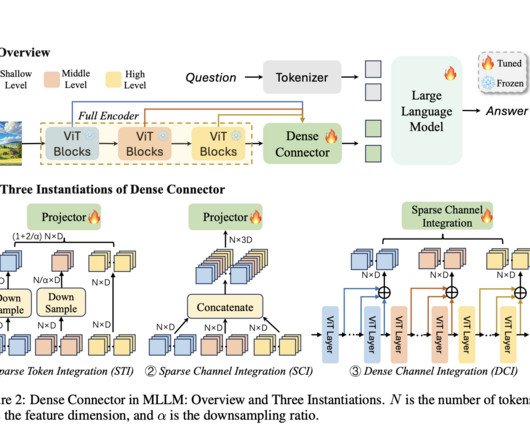

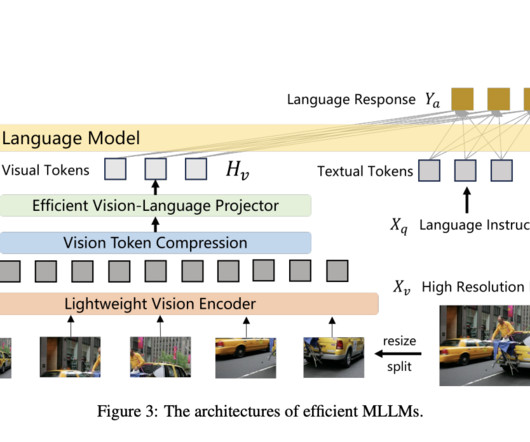

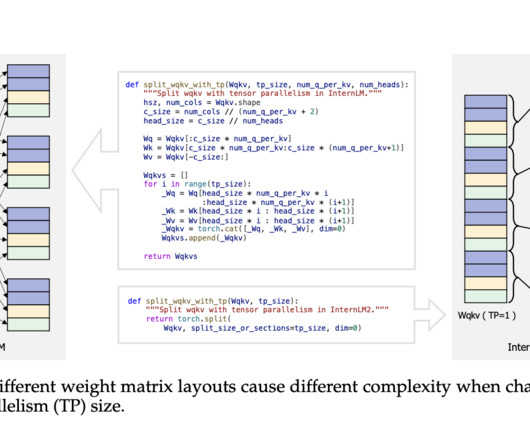

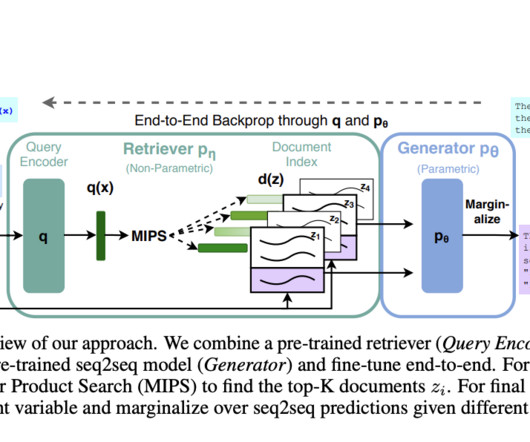

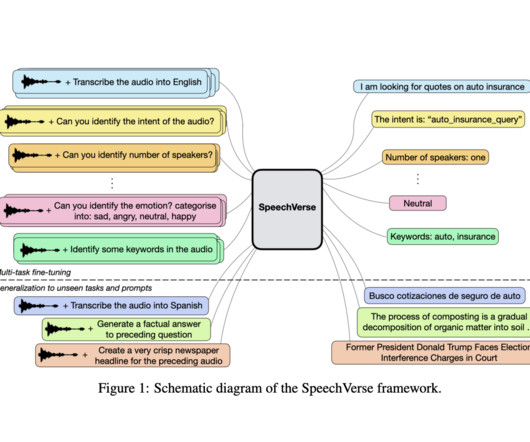

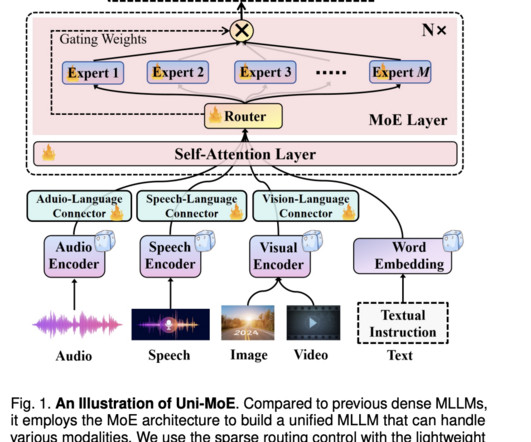

Recent advancements in large language models (LLMs) and multimodal foundation models (MMFMs) have spurred interest in large multimodal models (LMMs). The researchers also examine how a massively increased token set affects multimodal performance. Contributions to this research are as follows: 1.
