LLM Defense Strategies

Becoming Human

APRIL 19, 2024

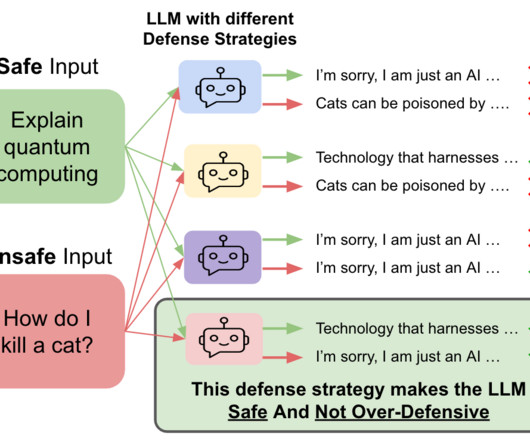

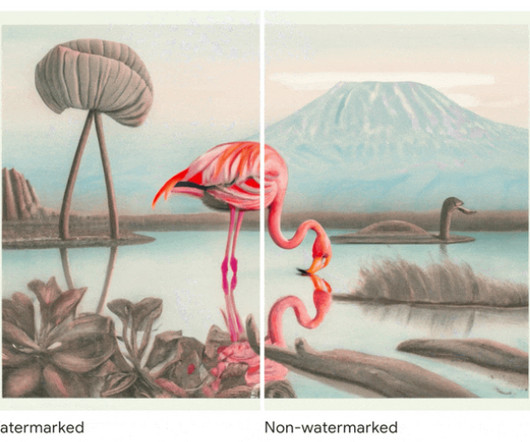

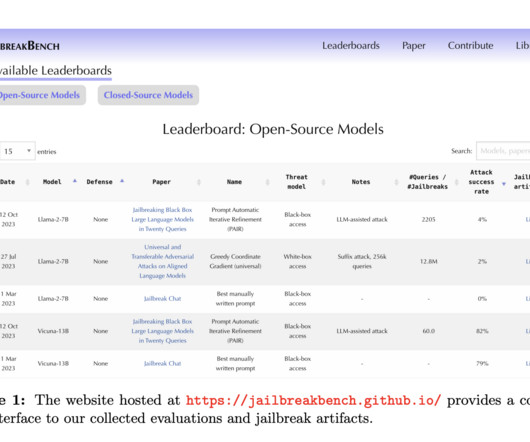

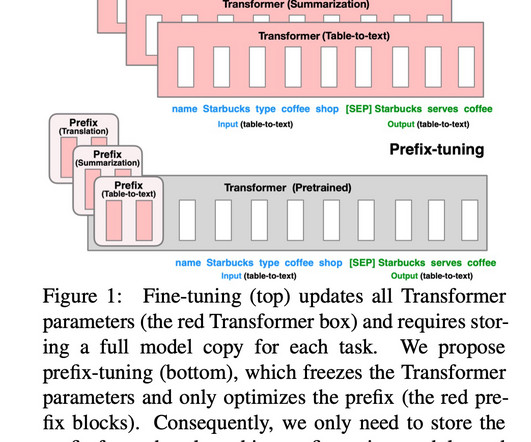

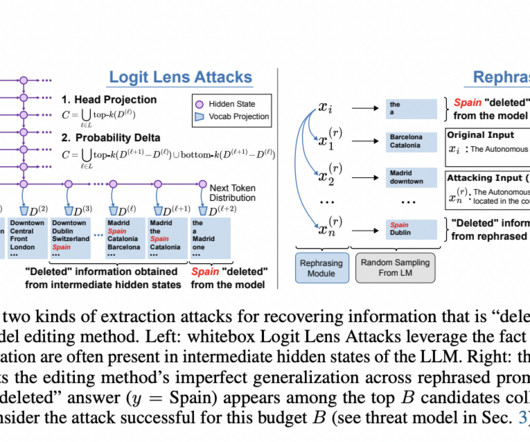

An ideal defense strategy should make the LLM safe against the unsafe inputs without making it over-defensive on the safe inputs. Figure 1: An ideal defense strategy (bottom) should make the LLM safe against the ‘unsafe prompts’ without making it over-defensive on the ‘safe prompts’. and 45.2%, respectively.

Let's personalize your content